Mux is a library for building autonomous AI agents. It handles the think-act loop, tool execution, LLM provider abstraction, and multi-agent coordination so you can focus on defining tools and business logic instead of wiring up state machines.

Available in two implementations:

- mux (Go): go get github.com/2389-research/mux

- mux-rs (Rust): mux = "0.10" in your Cargo.toml

Both share the same architecture and feature set. Pick whichever language fits your stack.

What it does

Multi-provider LLM abstraction. One client interface covers Anthropic (Claude), OpenAI, Google Gemini, OpenRouter, and Ollama. Swap providers by changing a constructor. All five support streaming and tool calling.

Universal tool interface. Every capability, whether a built-in function, an MCP server tool, or an external service, implements the same tool interface: name, description, approval requirement, and execution. Register tools in a thread-safe registry, and the orchestrator dispatches the model's tool calls to them through the executor.

Permission-gated approvals. Tools declare on a per-call basis whether they need approval, and the executor checks an approval function before running anything sensitive. This makes gating context-sensitive: for example, a write tool can require approval while a read tool runs freely. The Rust implementation adds a full policy engine with allow/deny rules, glob patterns, and conditional functions.

MCP client. Connects to external MCP servers over stdio or streamable HTTP. Lists tools, calls them via JSON-RPC 2.0, and adapts them into the universal tool interface automatically.
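To make the JSON-RPC 2.0 exchange concrete, this sketch encodes a tools/call request and extracts the text content from its result. The envelope fields (jsonrpc, id, method, params, result, error) come from JSON-RPC 2.0, and name/arguments, content, and isError follow the MCP tools/call shape; the Go types, the helper names, and the read_file tool are assumptions for illustration. Transport over stdio or HTTP is left out.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// rpcRequest is a JSON-RPC 2.0 request envelope.
type rpcRequest struct {
	JSONRPC string `json:"jsonrpc"`
	ID      int    `json:"id"`
	Method  string `json:"method"`
	Params  any    `json:"params,omitempty"`
}

// rpcResponse is a JSON-RPC 2.0 response envelope.
type rpcResponse struct {
	JSONRPC string          `json:"jsonrpc"`
	ID      int             `json:"id"`
	Result  json.RawMessage `json:"result,omitempty"`
	Error   *struct {
		Code    int    `json:"code"`
		Message string `json:"message"`
	} `json:"error,omitempty"`
}

// callToolParams and callToolResult follow the MCP tools/call shapes.
type callToolParams struct {
	Name      string         `json:"name"`
	Arguments map[string]any `json:"arguments,omitempty"`
}

type callToolResult struct {
	Content []struct {
		Type string `json:"type"`
		Text string `json:"text,omitempty"`
	} `json:"content"`
	IsError bool `json:"isError,omitempty"`
}

// encodeToolCall builds a tools/call request (hypothetical helper).
func encodeToolCall(id int, name string, args map[string]any) ([]byte, error) {
	return json.Marshal(rpcRequest{JSONRPC: "2.0", ID: id, Method: "tools/call",
		Params: callToolParams{Name: name, Arguments: args}})
}

// decodeToolText concatenates the text items of a tools/call result.
func decodeToolText(raw []byte) (string, error) {
	var resp rpcResponse
	if err := json.Unmarshal(raw, &resp); err != nil {
		return "", err
	}
	if resp.Error != nil {
		return "", fmt.Errorf("rpc error %d: %s", resp.Error.Code, resp.Error.Message)
	}
	var res callToolResult
	if err := json.Unmarshal(resp.Result, &res); err != nil {
		return "", err
	}
	text := ""
	for _, c := range res.Content {
		if c.Type == "text" {
			text += c.Text
		}
	}
	if res.IsError {
		return "", fmt.Errorf("tool error: %s", text)
	}
	return text, nil
}

func main() {
	req, _ := encodeToolCall(1, "read_file", map[string]any{"path": "README.md"})
	fmt.Println(string(req))
	fmt.Println(decodeToolText([]byte(`{"jsonrpc":"2.0","id":1,"result":{"content":[{"type":"text","text":"hello"}]}}`)))
}
```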

Agent hierarchies. Parent agents spawn children with restricted tool access. Denied tools accumulate down the tree. Each agent gets a hierarchical ID and fires lifecycle hooks at spawn and completion.
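One way to model accumulating denials, hierarchical IDs, and lifecycle hooks is sketched below. Agent, Hooks, Spawn, and the dotted ID format (root.1.1) are hypothetical; this README does not specify mux's ID scheme. Each child copies its parent's denied set and adds its own, so restrictions can only grow down the tree.

```go
package main

import "fmt"

// Agent is a hypothetical node in an agent tree, not mux's actual type.
// Spawn is not safe for concurrent use in this sketch.
type Agent struct {
	ID       string
	denied   map[string]bool
	children int
	hooks    Hooks
}

// Hooks holds hypothetical lifecycle callbacks.
type Hooks struct {
	OnSpawn    func(parentID, childID string)
	OnComplete func(id string)
}

func NewRoot(id string, hooks Hooks) *Agent {
	return &Agent{ID: id, denied: map[string]bool{}, hooks: hooks}
}

// Spawn creates a child whose denied set is the parent's plus extraDenied.
func (a *Agent) Spawn(extraDenied ...string) *Agent {
	a.children++
	child := &Agent{
		ID:     fmt.Sprintf("%s.%d", a.ID, a.children),
		denied: make(map[string]bool, len(a.denied)+len(extraDenied)),
		hooks:  a.hooks,
	}
	for t := range a.denied {
		child.denied[t] = true
	}
	for _, t := range extraDenied {
		child.denied[t] = true
	}
	if a.hooks.OnSpawn != nil {
		a.hooks.OnSpawn(a.ID, child.ID)
	}
	return child
}

// Allowed filters a candidate tool list against the accumulated denials.
func (a *Agent) Allowed(tools []string) []string {
	var out []string
	for _, t := range tools {
		if !a.denied[t] {
			out = append(out, t)
		}
	}
	return out
}

// Complete fires the completion hook.
func (a *Agent) Complete() {
	if a.hooks.OnComplete != nil {
		a.hooks.OnComplete(a.ID)
	}
}

func main() {
	hooks := Hooks{
		OnSpawn:    func(p, c string) { fmt.Println("spawn", p, "->", c) },
		OnComplete: func(id string) { fmt.Println("done", id) },
	}
	root := NewRoot("root", hooks)
	grandchild := root.Spawn("shell").Spawn("write_file")
	fmt.Println(grandchild.ID, grandchild.Allowed([]string{"read_file", "write_file", "shell"}))
	grandchild.Complete()
}
```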

Async execution and persistence. Run agents asynchronously with polling, bounded waits, and cancellation. Save conversation history as JSON or JSONL transcripts, then restore them to resume where you left off.

How it works

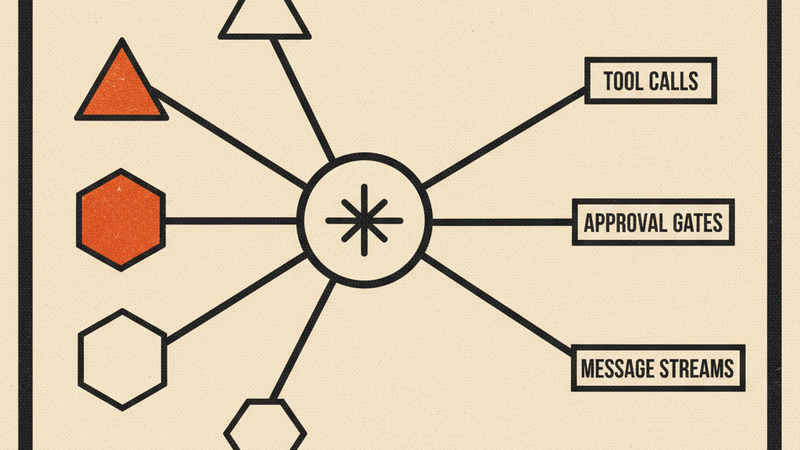

Three layers:

- LLM clients — Provider clients that normalize different APIs into a single request/response format with streaming, token tracking, and configurable defaults.

- Orchestrator — The think-act loop. Calls the LLM, parses tool use from the response, executes tools through the executor, feeds results back, and repeats until the model stops calling tools. Fires hooks at each stage. Compacts conversation history when it gets too long.

- Agent — Wraps the orchestrator with per-agent tool filtering, child agent management, async execution, and transcript persistence. Pre-built presets (Explorer, Planner, Researcher, Writer, Reviewer) cover common patterns.

Supporting packages: tool registry and executor, MCP protocol integration, rule-based access control, shared resource locking across agents, and lifecycle event hooks.
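As an illustration of shared resource locking across agents, here is a hypothetical LockManager in which each named resource has at most one owning agent ID at a time. The names and the non-blocking TryAcquire semantics are assumptions for this sketch; mux's locking package may behave differently.

```go
package main

import (
	"fmt"
	"sync"
)

// LockManager maps each resource name to the agent ID that owns it.
type LockManager struct {
	mu     sync.Mutex
	owners map[string]string // resource -> agent ID
}

func NewLockManager() *LockManager { return &LockManager{owners: map[string]string{}} }

// TryAcquire grants the resource if it is free or already held by agentID.
func (m *LockManager) TryAcquire(agentID, resource string) bool {
	m.mu.Lock()
	defer m.mu.Unlock()
	if owner, held := m.owners[resource]; held && owner != agentID {
		return false
	}
	m.owners[resource] = agentID
	return true
}

// Release frees the resource only if agentID currently owns it.
func (m *LockManager) Release(agentID, resource string) bool {
	m.mu.Lock()
	defer m.mu.Unlock()
	if owner, held := m.owners[resource]; !held || owner != agentID {
		return false
	}
	delete(m.owners, resource)
	return true
}

// ReleaseAll frees every resource held by agentID, e.g. when it completes.
func (m *LockManager) ReleaseAll(agentID string) int {
	m.mu.Lock()
	defer m.mu.Unlock()
	n := 0
	for res, owner := range m.owners {
		if owner == agentID {
			delete(m.owners, res) // deleting during range is safe in Go
			n++
		}
	}
	return n
}

func main() {
	m := NewLockManager()
	fmt.Println(m.TryAcquire("root.1", "repo"), m.TryAcquire("root.2", "repo"))
}
```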

Rust extras

The Rust workspace ships additional crates:

- mux-ffi — UniFFI bindings for Swift (iOS/macOS) and Kotlin (Android)

- code-agent — a reference agent implementation

- agent-test-tui — interactive TUI for testing agent behavior

Requirements

Go: Go 1.24+ and at least one LLM API key (ANTHROPIC_API_KEY, OPENAI_API_KEY, or GEMINI_API_KEY)

Rust: Rust 2024 edition (1.85+), tokio runtime, and API keys for your chosen LLM provider
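For the Go implementation, a program might pick a provider based on whichever API key is set. This sketch assumes a preference order (Anthropic, then OpenAI, then Gemini) that mux does not prescribe, and pickProvider is a hypothetical helper; taking the lookup function as a parameter lets you pass os.LookupEnv in production and a map in tests.

```go
package main

import (
	"errors"
	"fmt"
	"os"
)

// keyOrder is an assumed preference order, not one mux prescribes.
var keyOrder = []struct{ Provider, EnvVar string }{
	{"anthropic", "ANTHROPIC_API_KEY"},
	{"openai", "OPENAI_API_KEY"},
	{"gemini", "GEMINI_API_KEY"},
}

// pickProvider returns the first provider whose key is set and non-empty.
func pickProvider(lookup func(string) (string, bool)) (string, error) {
	for _, k := range keyOrder {
		if v, ok := lookup(k.EnvVar); ok && v != "" {
			return k.Provider, nil
		}
	}
	return "", errors.New("no LLM API key found; set ANTHROPIC_API_KEY, OPENAI_API_KEY, or GEMINI_API_KEY")
}

func main() {
	p, err := pickProvider(os.LookupEnv)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Println("using provider:", p)
}
```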