If your test passes but the real system doesn’t work, your test is lying to you. This plugin enforces testing against real dependencies — actual services, actual data, no mocks. It auto-triggers when you write tests, validate features, or mention mocking, and redirects you toward scenarios that prove the system works.

Install

/plugin marketplace add 2389-research/claude-plugins

/plugin install scenario-testing

What it does

The plugin enforces one rule: no feature is validated until a scenario passes with real dependencies.

When you ask Claude Code to write tests, this skill intercepts and steers you toward the scenario approach. Instead of unit tests with mocked dependencies, you write scenario files in a .scratch/ directory that hit real services — real databases (test instances), real auth providers (sandbox mode), real file storage (test buckets). Sandbox and test modes count. Mocks don’t.

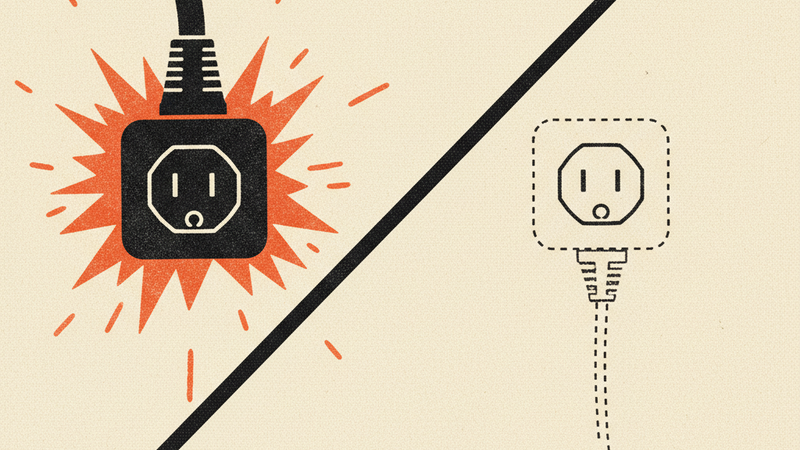

The truth hierarchy frames the philosophy:

- Scenario tests (real system, real data) — truth

- Unit tests (isolated logic) — useful but not proof

- Mocks — lies hiding bugs

A mocked test tells you your code called the right function with the right arguments. A scenario test tells you the thing actually works.

Each scenario runs standalone. No ordering dependencies between them. Every scenario sets up its own test data, runs against real services, and cleans up after itself. You can run them in parallel and they’ll work in CI/CD without flaky sequencing issues.
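To make the standalone rule concrete, here is a minimal sketch of two scenarios that each create their own data, exercise a real database engine, and clean up afterward. It rests on several assumptions: a SQLite file stands in for your test database instance so the example runs anywhere, and the `users` table, `open_db`, `run_all`, and the scenario names are all hypothetical, not part of the plugin.

```python
import os
import sqlite3
import tempfile
import uuid


def open_db(path):
    """Connect to the test database and make sure the (hypothetical) schema exists."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS users (id TEXT PRIMARY KEY, email TEXT UNIQUE)"
    )
    conn.commit()
    return conn


def scenario_user_can_be_created(db_path):
    """Insert a user, read it back, then remove it."""
    conn = open_db(db_path)
    user_id = uuid.uuid4().hex
    email = f"{user_id}@example.com"  # unique per run, so runs never collide
    try:
        conn.execute("INSERT INTO users (id, email) VALUES (?, ?)", (user_id, email))
        conn.commit()
        row = conn.execute(
            "SELECT email FROM users WHERE id = ?", (user_id,)
        ).fetchone()
        assert row == (email,)
    finally:
        # Cleanup runs even when the assertion above fails.
        conn.execute("DELETE FROM users WHERE id = ?", (user_id,))
        conn.commit()
        conn.close()


def scenario_duplicate_email_is_rejected(db_path):
    """Check that the database itself enforces the unique-email constraint."""
    conn = open_db(db_path)
    email = f"{uuid.uuid4().hex}@example.com"
    try:
        conn.execute(
            "INSERT INTO users (id, email) VALUES (?, ?)", (uuid.uuid4().hex, email)
        )
        conn.commit()
        rejected = False
        try:
            conn.execute(
                "INSERT INTO users (id, email) VALUES (?, ?)", (uuid.uuid4().hex, email)
            )
        except sqlite3.IntegrityError:
            rejected = True
        assert rejected, "duplicate email was accepted"
    finally:
        conn.rollback()  # discard any half-finished transaction
        conn.execute("DELETE FROM users WHERE email = ?", (email,))
        conn.commit()
        conn.close()


def run_all(scenarios, db_path):
    """Run scenarios in the given order and return how many rows they left behind."""
    for scenario in scenarios:
        scenario(db_path)
    conn = open_db(db_path)
    try:
        return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    finally:
        conn.close()


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "scenario-test.db")
    both = [scenario_user_can_be_created, scenario_duplicate_email_is_rejected]
    print("rows left after forward run:", run_all(both, path))
    print("rows left after reversed run:", run_all(both[::-1], path))
```

Because every scenario generates its own identifiers and deletes them in a `finally` block, the run order does not matter and nothing leaks between runs.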

Rationalizations the skill rejects:

- “Just a quick unit test…” — unit tests don’t validate features

- “Too simple for end-to-end…” — integration breaks simple things

- “I’ll mock for speed…” — speed doesn’t matter if tests lie

How it works

Two artifacts, two lifecycles.

.scratch/ files are temporary scenario scripts. Write them in whatever language fits the task. They live in .scratch/, which is gitignored, so they never get committed. These are your working tests, the ones that hit real services and prove things work.

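    # .scratch/test-auth.py
    # register_user, login, and delete_user stand in for your project's own
    # client code that talks to the real (sandbox) auth service.

    def test_user_can_register_and_login():
        user = register_user(email="test@example.com", password="s3cure")
        assert user.id is not None
        try:
            token = login(email="test@example.com", password="s3cure")
            assert token is not None
        finally:
            delete_user(user.id)  # cleanup runs even if login fails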

scenarios.jsonl is the permanent record. When a scenario passes and the pattern is worth keeping, you extract it as a one-line JSON entry. This file is committed — it describes what should work without containing the throwaway test code.

{"name":"user-auth","description":"Register and login with real auth service","given":"New credentials","when":"User registers then logs in","then":"Valid token returned","validates":"Auth flow"}
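As a rough illustration of the extraction step, the sketch below appends a passing scenario to scenarios.jsonl as one JSON object per line. The helper names `record_scenario` and `load_scenarios` are hypothetical. Treating all six fields from the example entry as required, and skipping entries whose name is already recorded, are assumptions made for this sketch and not documented plugin behavior.

```python
import json
import tempfile
from pathlib import Path

# Field names taken from the example entry; requiring all of them is an assumption.
REQUIRED_FIELDS = ("name", "description", "given", "when", "then", "validates")


def load_scenarios(path):
    """Read every non-empty line of the JSONL file as one scenario dict."""
    path = Path(path)
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]


def record_scenario(path, entry):
    """Append one scenario as a single JSON line.

    Returns False without writing if a scenario with the same name exists.
    """
    missing = [field for field in REQUIRED_FIELDS if not entry.get(field)]
    if missing:
        raise ValueError(f"scenario entry is missing fields: {missing}")
    path = Path(path)
    if any(item.get("name") == entry["name"] for item in load_scenarios(path)):
        return False
    with path.open("a", encoding="utf-8") as handle:
        # Compact separators keep the entry on one short line.
        handle.write(json.dumps(entry, separators=(",", ":")) + "\n")
    return True


if __name__ == "__main__":
    target = Path(tempfile.mkdtemp()) / "scenarios.jsonl"
    added = record_scenario(target, {
        "name": "user-auth",
        "description": "Register and login with real auth service",
        "given": "New credentials",
        "when": "User registers then logs in",
        "then": "Valid token returned",
        "validates": "Auth flow",
    })
    print("added:", added, "total entries:", len(load_scenarios(target)))
```

Keeping one object per line means each new scenario shows up as a single added line in a diff, which suits a file that only grows.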

Write in .scratch/, run against real services, verify it passes, extract the pattern to scenarios.jsonl, move on. Scratch files accumulate during development and can be deleted at any time, since they are never committed. The JSONL file grows as a living spec of what the system can do.